Our unstructured data miner leverages your business and domain-specific language to pinpoint the high-value text data you need for applications in AI, LLMs, ML, RPA, BI, Talent, Research, BAU, and more. In “DataScava: How It Pinpoints Unstructured Text Data Using Your Business Language,” we explain how it helps people unlock the full potential of their data. They define the abstract topics and themes that represent their own business and subject matter expertise and apply them to big data sets in real time, all while keeping the Human in Command in the era of AI and advanced analytics.

Articles by Scott Spangler

DataScava commissioned a series of six articles from Scott Spangler, former IBM Watson Health Researcher, Chief Data Scientist, and author of the book “Mining the Talk: Unlocking the Business Value in Unstructured Information.” Scott discusses how and why DataScava’s patented, precise approach to mining unstructured text data perfectly complements real-world big data applications in AI, LLMs, ML, RPA, BI, Research, Talent, and BAU. He also contrasts our Tailored Topics Taxonomies, Domain-Specific Language Processing, and Weighted Topic Scoring methodologies with standard approaches such as NLP.

“Machines in the Conversation: The Case for a More Data-Centric AI,” published in CDO Magazine and excerpted from a longer article that includes product information.

“Executive Q&A: DataScava, AI and ML”

“The Key Ingredients for Game-Changing Business Intelligence (BI) from Unstructured Textual Data”

“Consistent High-Quality Robot Process Automation (RPA) Requires Deep Customer Understanding”

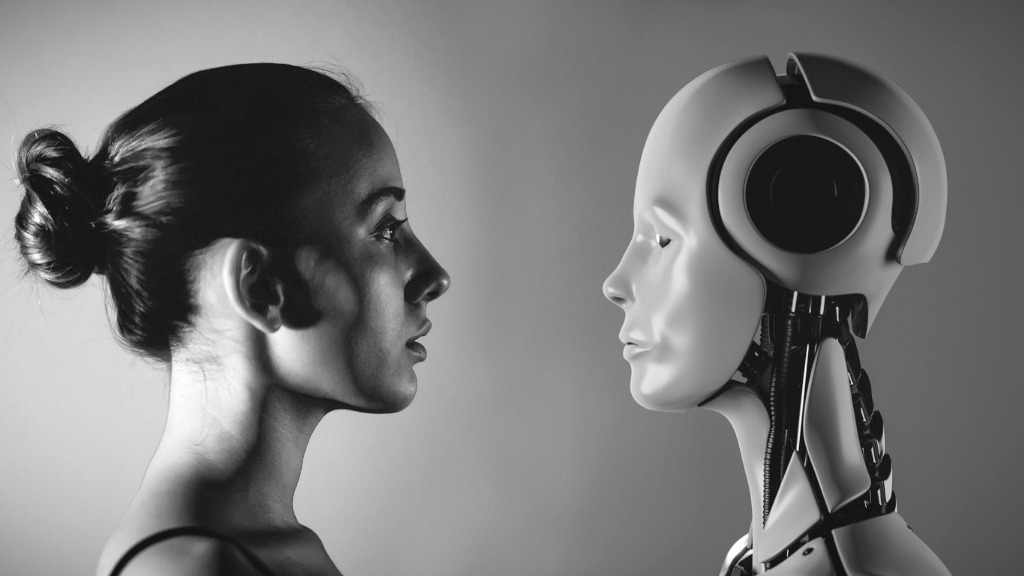

“Who’s in Charge of Your Business: The Humans or the Machines?”

Scott’s first article discusses:

- The pitfalls of using a fully automated approach to critical decision-making.

- The desirability of having a parallel human-machine partnership that regulates and monitors the inputs and outputs of automated approaches.

- The three basic ingredients that are needed to make that hybrid process successful and how DataScava implements each of these components.

Here’s an excerpt:

“Algorithms will be more effective in the long run if they are part of a more holistic framework that includes user-controlled domain-specific ontologies, statistical analysis, and rule-based reasoning strategies. These are the basic ingredients that a tool like DataScava provides.”

DataScava . . .

“Is a robot ally in humanity’s struggle for control of how we utilize big data to make decisions. By providing tools for defining the key underlying topics and rules that govern a business’s important concepts, it levels the playing field so that machine learning no longer has to have the final say on critical business decisions.

Can supervise the process based on human-provided expertise and determine which data to use for training and which to avoid, as well as in which situations to trust deep learning decisions and when to fall back on more rule-based approaches. Such processes put the humans back in charge and allow the machines to serve their intended role as adjuncts and trusted advisors.

Can act effectively, in partnership with a trained human mind, as a tool for giving the left brain an equal say in big data decision-making tasks.

Can play a leading role in helping businesses manage and maintain their big data more efficiently using information ontologies, statistics with visualization, and rule-based approaches.

Perfectly complements existing approaches to unlocking the value of unstructured text data by helping companies model the higher-level intents and purposes behind the labeling and classification of data, define the abstract topics and themes that represent their own business and subject matter expertise, and apply both to big data sets in real time.

Provides a practical, easy-to-use toolset for defining the business ontologies that form the critical bridge between unstructured text data analysis using standard data science techniques and the human expertise that gives your business its competitive edge.

When a deep learning system and DataScava agree on a classification, that’s ideal, because we then have a plausible explanation for why the deep learning algorithm decided the way it did.

Can help data professionals and business people use machine and human intelligence together to make their messy unstructured text data more accessible, understandable, and actionable.”